I’ve always been a believer in the saying, “If you can measure it, you can manage it!” Metrics seem to be the first thing security professionals think of but typically the last thing to be implemented, and understandably so: you need a process in place before you can start measuring it.

I propose a change in how metrics are perceived. Most people explicitly measure the positive, not the negative. For example, it’s easy for executives to agree that patching is a success when you report that 80% of servers are patched. But the inverse of that metric is that 20% of servers aren’t patched. Whatever the percentage reported, stating it in the negative elicits a much different perspective on that metric. In other words, an executive who sees a positive metric rarely expects to see 100%; however, when a negative metric is presented, there’s pressure to move it to 0%.
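To make the framing concrete, here is a minimal Python sketch that reports the same patching data both ways. The function name `patch_metrics` and the server counts (500 total, 400 patched) are hypothetical, chosen only to reproduce the 80%/20% split above; they are not figures from a real environment.

```python
def patch_metrics(total_servers: int, patched_servers: int) -> dict:
    """Return the same patching data framed positively and negatively."""
    if total_servers <= 0:
        raise ValueError("total_servers must be positive")
    if not 0 <= patched_servers <= total_servers:
        raise ValueError("patched_servers must be between 0 and total_servers")

    unpatched = total_servers - patched_servers
    return {
        # Positive framing: share of servers that are patched.
        "patched_pct": round(100 * patched_servers / total_servers, 1),
        # Negative framing: share (and count) of servers still exposed.
        "unpatched_pct": round(100 * unpatched / total_servers, 1),
        "unpatched_count": unpatched,
    }


# Hypothetical inventory: 500 servers, 400 of them patched.
report = patch_metrics(total_servers=500, patched_servers=400)
print(f"Positive framing: {report['patched_pct']}% of servers patched")
print(
    f"Negative framing: {report['unpatched_pct']}% unpatched "
    f"({report['unpatched_count']} servers)"
)
```

Reporting the unpatched count alongside the percentage is a deliberate choice in this sketch: a concrete number of exposed servers tends to create the pressure toward 0% described above.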

The second thing I propose is that metrics should be tied to business objectives. Metrics should articulate strategic alignment with a business driver. As a security department, you’ll have much better success at budget negotiation time when you can clearly demonstrate that security initiatives support the overall business strategy.

The third thing I propose is to purposefully structure the context of the metric. This means you need to know the business objectives, high-value services or assets, critical security controls, critical business risks, and disruptive events that could impact the brand value of the company or the hard-earned revenue stream. Once you’ve considered these areas, you need to think about how the metric is going to be composed (e.g., is it built from projects, tasks, performance goals, or fiscal investment?). You should be prepared to explain how the metric is composed and why. If there’s any doubt about the accuracy or completeness of the metric, you’ll lose credibility.

For consistency in the metric examples, the scenario we’ll use is the outsourcing of security operations. Remember, these are strategic metrics, and if measured correctly, they will lead to productive conversations.

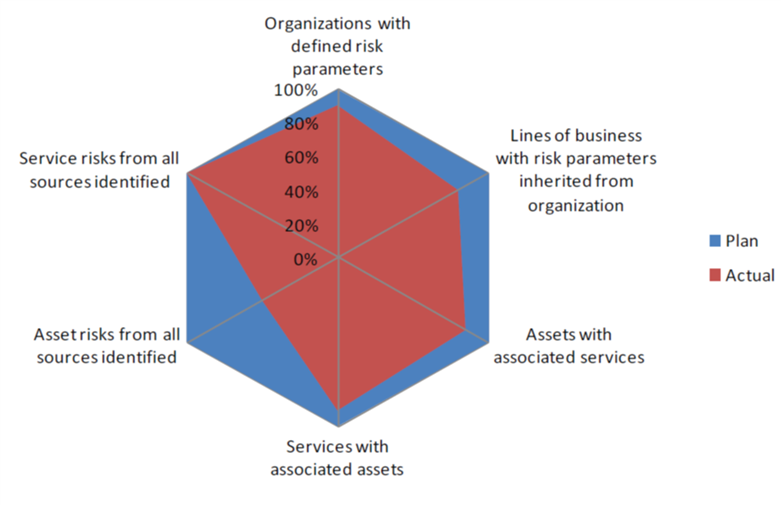

Top 10 Metrics (from the folks at the Carnegie Mellon University CERT)

Justin (he/him) is the founder and CEO of NuHarbor Security, where he continues to advance modern integrated cybersecurity services. He has over 20 years of cybersecurity experience, much of it earned while leading security efforts for multinational corporations, most recently serving as global CISO at Keurig Green Mountain Coffee. Justin serves multiple local organizations in the public interest, including his board membership at Champlain College.